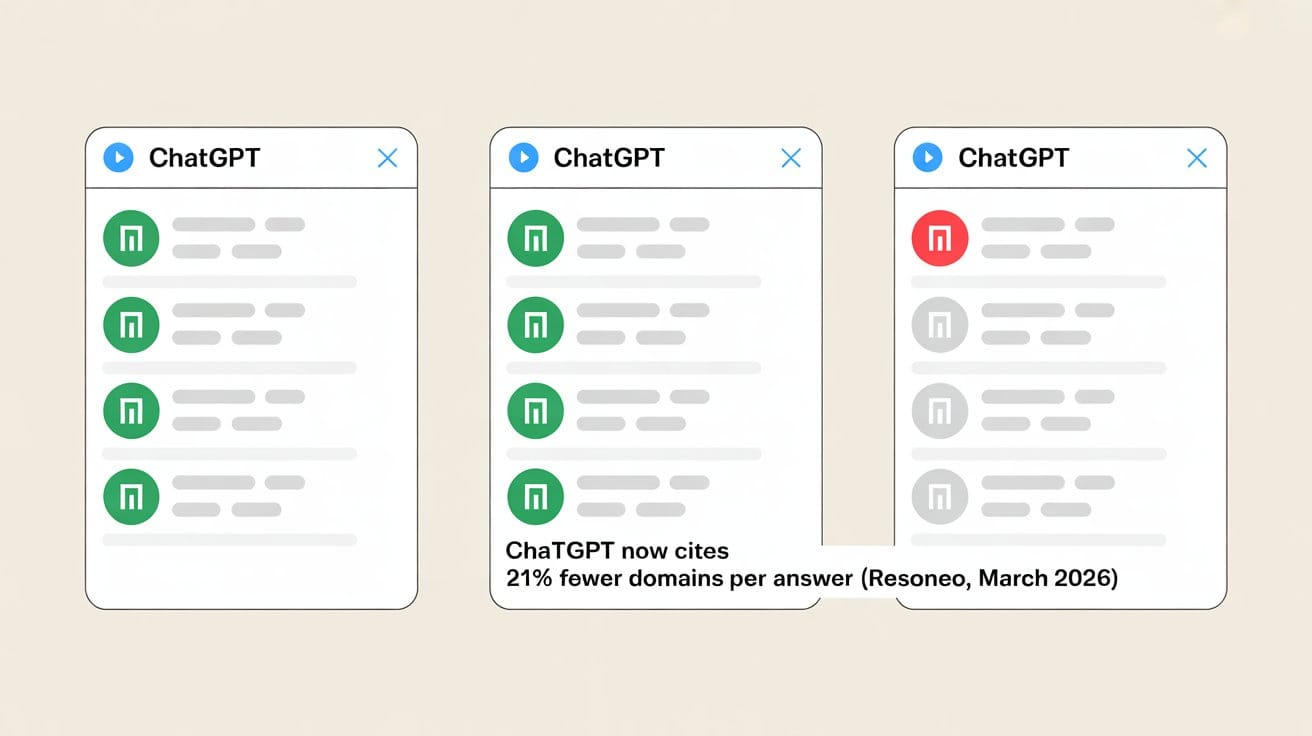

Between January and March 2026, the average number of unique domains ChatGPT cited per response dropped from 19 to 15 — a 21% decline tracked across 27,000 responses by French SEO consultancy Resoneo. The citation pool is actively contracting.

The firms already named are winning more of each answer

After ChatGPT upgraded to GPT-5.3 Instant in March, fewer domains share each answer. A competitor whose name already appears when someone asks "financial planner South Yarra" doesn't just hold its position; it now occupies a larger share of a smaller field.

This matters because of the kind of lead that follows a ChatGPT answer. CallRail's 2026 attribution data shows ChatGPT drives 90.1% of AI-referred enquiries, and those leads arrive decision-ready: the AI has already compared providers on their behalf. By the time someone picks up the phone, they've decided who to call.

What to do right now: Open ChatGPT and run three queries: "financial planner [your suburb]", "financial planner [your specialisation] Melbourne", and "[your firm name] financial advice." If you're not named in at least two of the three, you have a gap, and the window to close it is narrowing faster than it was 90 days ago.

Sources: Search Engine Journal — ChatGPT Search Is Citing Fewer Sites · Search Engine Journal — How AI Is Changing Lead Generation

What we found scanning 7 Melbourne financial planning firms

We ran AI visibility scans across 7 financial planning and advisory firms in Melbourne and regional Victoria between March and April 2026, testing each practice's appearance across ChatGPT, Perplexity, Gemini, Copilot, and Google AI Overviews on category discovery queries. These are queries a cold prospect types when they don't already know the firm's name.

Five of the seven had zero visibility on competitive category queries. Three were absent across every query type, including direct brand searches. One of the firms with zero competitive visibility manages more than $100 million in assets and wrote over 100 Statements of Advice in its first year of operation. Another scored well on suburb-level queries, but when tested on its stated primary specialisation (SMSF advice), it didn't appear on a single platform.

A strong website doesn't change this. Established credentials don't change it. The signals AI platforms use to decide who to name (schema markup, entity records, third-party corroboration) are almost entirely disconnected from the signals most practices invest in.

What to do right now: Right-click your website and select "View Page Source." Search the source code for "schema.org". If nothing appears, AI platforms have no easy way to read your practice type, location, AFSL number, or specialisations from your website. Those are the signals that determine whether you appear in a category query.
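To check several pages, or to see which fields your markup actually contains, you can script the same check. Below is a minimal sketch in Python using only the standard library. It assumes your structured data is served as JSON-LD in the page's initial HTML. Markup injected later by JavaScript won't be visible to it, just as it isn't visible in View Page Source. Mapping practice type, location, AFSL number and specialisations to the schema.org properties `@type`, `address`, `identifier` and `knowsAbout` is our own assumption for illustration; no AI platform requires it. The names `audit_schema`, `fetch` and `SIGNALS` are hypothetical.

```python
import json
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        # Script contents may arrive in several chunks, so append rather than overwrite.
        if self._in_jsonld:
            self.blocks[-1] += data


# Assumed mapping (hypothetical) from the signals named above to schema.org properties.
SIGNALS = {
    "practice type": "@type",
    "location": "address",
    "AFSL number": "identifier",
    "specialisations": "knowsAbout",
}


def audit_schema(html: str) -> dict:
    """Report whether a page mentions schema.org and which assumed signals its JSON-LD carries."""
    parser = JsonLdExtractor()
    parser.feed(html)
    items = []
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD is skipped, not repaired
        # A block may hold one object, a list of objects, or an @graph container.
        if isinstance(data, dict) and "@graph" in data:
            data = data["@graph"]
        items.extend(data if isinstance(data, list) else [data])
    found = {
        label: any(isinstance(item, dict) and key in item for item in items)
        for label, key in SIGNALS.items()
    }
    return {
        "mentions_schema_org": "schema.org" in html,
        "jsonld_blocks": len(parser.blocks),
        "signals": found,
    }


def fetch(url: str) -> str:
    """Download a page's initial HTML (no JavaScript is executed)."""
    req = Request(url, headers={"User-Agent": "schema-check/0.1"})
    with urlopen(req, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")
```

Usage would look like `print(audit_schema(fetch("https://www.example.com.au/")))`, with your own URL in place of the placeholder. A `False` under a signal means only that the property wasn't found under this sketch's assumed mapping. It doesn't mean the information is missing from the page.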

Source: LogitRank AI Visibility Scans, March–April 2026

Perplexity also reads your LinkedIn profile

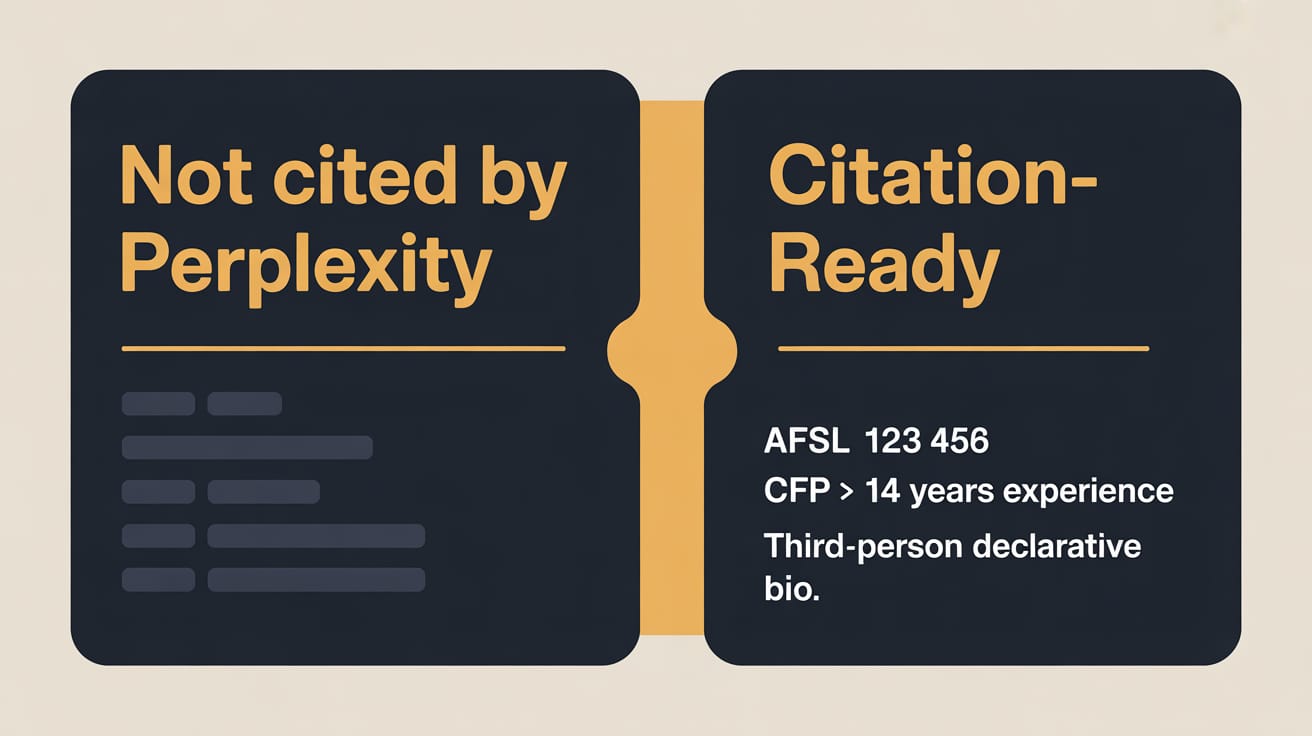

A study of 30 million AI citations across ChatGPT, Gemini, Perplexity, Google AI Overviews, and AI Mode (Peec AI, April 2026) found that Perplexity specifically weights LinkedIn profiles and B2B directories for professional service queries.

For financial planners, that means Perplexity's decision to name you likely depends in part on whether your LinkedIn profile contains your AFSL number, your credentials in structured form, and a bio written in declarative sentences a machine can parse. Most adviser profiles are written to impress peers, not to be read by an algorithm deciding who to recommend.

What to do right now: Open your LinkedIn profile. Is your AFSL number in your About or Experience section? Are your credentials (CFP, FChFP, SMSF Specialist) listed explicitly? If not, rewrite the opening of your About section along these lines: "Jane Smith is a fee-only financial planner based in South Yarra, licensed under AFSL [number], specialising in [specialisation]." Save. That's Perplexity's entry point.

LinkedIn Articles are cited by AI at 5.8× the rate of regular posts

A study of 1.8 million social citations across 250+ brands (higoodie.com, October 2025–February 2026) found that LinkedIn Articles generate AI citations at 5.8 times the rate of feed posts. Articles have stable, crawlable URLs and extractable long-form text. Feed posts don't. AI models can parse one and not the other.

If you're already posting to LinkedIn, you're generating a fraction of the citation return that same content could produce. The research doesn't need to change. The format does.

What to do this week: Take your next LinkedIn post draft and publish it as a LinkedIn Article instead. Add a short, descriptive heading to each main point. On the higoodie.com figures, the same content could earn roughly six times the AI citations it would as a feed post.

The pattern across all four findings is the same: AI platforms build answers from structured signals, and the practices already producing those signals are compounding their lead as the citation pool shrinks. Firms that aren't there yet are being displaced from the answer by competitors who got there first.